Differences between NLP, NLU, and NLG: A professional explanation of how AI comprehends and generates text

AI’s ability to understand human language and engage in natural conversation is built on a core technology known as Natural Language Processing (NLP). With the rise of advanced systems such as ChatGPT, the quality of machine-generated text has improved dramatically, enabling interactions that can be difficult to distinguish from human communication.

Despite their growing importance, the terms NLP (Natural Language Processing), NLU (Natural Language Understanding), and NLG (Natural Language Generation) are often used interchangeably, leading to confusion about their precise roles and functions.

This article provides a systematic explanation of these three concepts, detailing how AI analyzes human language, derives meaning, and produces coherent responses. By understanding how these technologies interact, readers can gain deeper insights into the mechanisms behind AI-driven conversation and text generation.

1. Overview of Natural Language Processing (NLP)

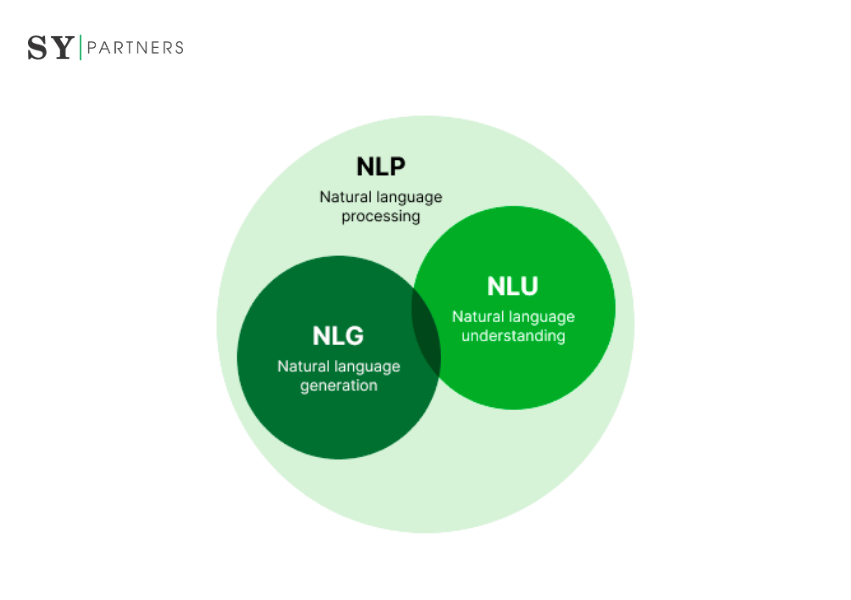

Natural Language Processing (NLP) is an area of AI that enables computers to interpret, analyze, and generate human language. It extracts meaning from text or speech and is used in applications such as translation, speech recognition, and search prediction.

NLP is generally divided into two major components:

- NLU (Natural Language Understanding): Techniques for interpreting context, semantics, and intent

- NLG (Natural Language Generation): Techniques for producing natural and contextually appropriate text

Representative Techniques and Applications

| Technique | Application |
|---|---|
| Morphological Analysis | Word segmentation, part-of-speech tagging (e.g., Japanese tokenization) |
| Syntactic Parsing | Understanding sentence structure (e.g., subject–predicate relationships) |
| Semantic Analysis | Inferring meaning based on vocabulary and context |
| Sentiment Analysis | Detecting emotions in social media posts and reviews |
| Chatbots | Automating customer support and conversational interactions |

Advances in these technologies have enabled more natural and accurate AI dialogues, fostering rapid progress in various language-processing systems.

How Natural Language Processing (NLP) Works

In the classical pipeline view, Natural Language Processing (NLP) operates through four main stages: (1) Morphological Analysis, (2) Syntactic Parsing, (3) Semantic Analysis, and (4) Contextual Analysis. Each stage builds on the output of the previous one, as explained below.

1. Morphological Analysis

In this stage, sentences are divided into their smallest meaningful units, or morphemes, and each word is annotated with information such as its part of speech. This allows AI to learn the roles and relationships of vocabulary, forming the foundational understanding necessary for natural language comprehension and generation.
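As a rough illustration of this stage, the sketch below splits an English sentence into word tokens and attaches a part-of-speech tag to each one. The `TOY_LEXICON` table and the `tag_tokens` helper are hypothetical names invented for this example; production systems use trained morphological analyzers rather than a hand-written lookup.

```python
import re

# Hypothetical mini-lexicon mapping lowercase word forms to part-of-speech tags.
TOY_LEXICON = {
    "the": "DET",
    "a": "DET",
    "cat": "NOUN",
    "mouse": "NOUN",
    "chased": "VERB",
}


def tag_tokens(sentence: str) -> list[tuple[str, str]]:
    """Split a sentence into word tokens and attach a POS tag to each."""
    tokens = re.findall(r"[A-Za-z]+", sentence)
    # Words missing from the lexicon are tagged "UNK" (unknown).
    return [(tok, TOY_LEXICON.get(tok.lower(), "UNK")) for tok in tokens]


tagged = tag_tokens("The cat chased a mouse.")
print(tagged)
```

Each token now carries the annotation that later stages rely on, and unknown words are flagged instead of being silently dropped.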

2. Syntactic Parsing

Building on morphological analysis, syntactic parsing examines the relationships between words to construct the sentence’s grammatical structure. Common approaches include dependency parsing and constituency parsing, with dependency parsing being predominantly used in Japanese NLP. This step enables the AI to understand how words function together to convey meaning.
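To make the output of dependency parsing concrete, the following sketch shows one common way to represent a parse: each token stores the index of its head and a relation label. The parse itself is hand-annotated for illustration, not computed, and the `Token` class and `dependents` helper are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Token:
    index: int     # 1-based position in the sentence
    text: str
    head: int      # index of the governing token; 0 marks the root
    relation: str  # dependency label


# Hand-annotated parse of "The cat chased a mouse" (illustrative only).
PARSE = [
    Token(1, "The", 2, "det"),
    Token(2, "cat", 3, "nsubj"),
    Token(3, "chased", 0, "root"),
    Token(4, "a", 5, "det"),
    Token(5, "mouse", 3, "obj"),
]


def dependents(parse: list[Token], head_index: int, relation: str) -> list[str]:
    """Return the words attached to a given head with a given relation."""
    return [t.text for t in parse if t.head == head_index and t.relation == relation]


root = next(t for t in PARSE if t.head == 0)
subject = dependents(PARSE, root.index, "nsubj")
direct_object = dependents(PARSE, root.index, "obj")
print(root.text, subject, direct_object)
```

Once the structure is available, a question such as "who did what to whom" reduces to a lookup over head indices and labels.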

3. Semantic Analysis

Semantic analysis selects the most accurate and natural interpretation from multiple possible readings of a sentence derived from syntactic parsing. This stage represents the AI’s ability to "understand the meaning" of the text at a sentence level.
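One simple instance of choosing among competing readings is word sense disambiguation. The sketch below uses a simplified Lesk-style heuristic: it picks the sense whose dictionary gloss shares the most words with the surrounding sentence. The `SENSES` glosses are written for this example and are not taken from a real dictionary; modern systems generally rely on learned contextual representations instead.

```python
# Hypothetical sense inventory with short glosses written for this example.
SENSES = {
    "bank": {
        "financial_institution": "an institution that accepts deposits and lends money",
        "river_side": "the sloping land beside a river or lake",
    }
}


def disambiguate(word: str, context: str) -> str:
    """Pick the sense whose gloss overlaps most with the context words."""
    context_words = set(context.lower().split())
    best_sense, best_overlap = "", -1
    for sense, gloss in SENSES[word].items():
        overlap = len(context_words & set(gloss.split()))
        if overlap > best_overlap:
            best_sense, best_overlap = sense, overlap
    return best_sense


print(disambiguate("bank", "She sat on the bank of the river"))
print(disambiguate("bank", "He deposits money at the bank"))
```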

4. Contextual Analysis

To grasp the flow of a text, contextual analysis evaluates relationships across multiple sentences. Accurate context understanding requires sophisticated reasoning and knowledge representation. Recent advances in machine learning and neural networks have significantly improved the precision of this stage, enabling AI to generate more coherent and contextually appropriate responses.

2. Overview of Natural Language Understanding (NLU)

Natural Language Understanding (NLU) is a subfield of NLP that enables computers to “understand” human language by extracting meaning and intent from text. While NLP covers the broader processing of language data, NLU specifically focuses on context comprehension and intent recognition.

The primary components of NLU include:

- Intent Recognition: Identifying the user’s purpose (e.g., “I want to order a pizza” → order intent).

- Entity Extraction: Extracting specific information from text (e.g., “Reservation at 2 PM” → time entity: 2 PM).

- Contextual Understanding: Interpreting each utterance in light of conversation history and situational context, so that later responses can be grounded in what was said earlier.

NLU is widely applied in voice assistants (e.g., Siri, Alexa), chatbots, sentiment analysis, and machine translation, enabling more natural interactions and improving overall user experience.

How NLU Works and Its Core Technologies

Natural Language Understanding (NLU) is the process of converting human language into a machine-readable form. It involves multiple stages and leverages advanced techniques to accurately interpret meaning, intent, and context across diverse languages and cultural settings.

1. Text Preprocessing

The first step prepares input data (text or speech) for machine processing:

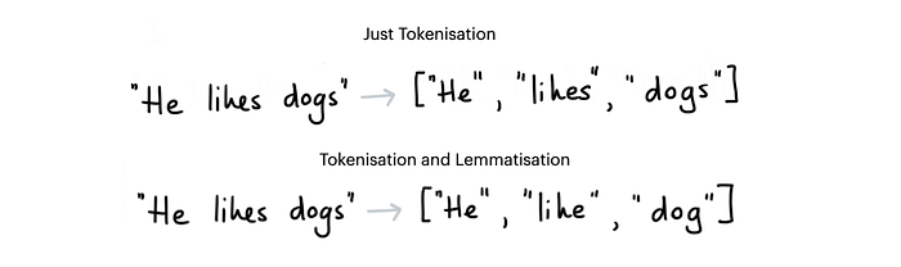

- Tokenization: Splitting sentences into words or phrases. In languages like Japanese, morphological analysis is used, while in English, word segmentation and punctuation handling are common.

- Normalization: Standardizing capitalization, punctuation, diacritics, and removing noise.

- Stop-word Removal: Removing frequently occurring words with little semantic value (e.g., the, is, and in English, or particles in other languages).
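The three preprocessing steps above can be sketched with the Python standard library alone. The stop-word list here is a tiny illustrative sample, and the function names are assumptions made for this example; real pipelines typically use language-specific tokenizers and curated stop-word lists.

```python
import re
import unicodedata

# Small illustrative stop-word sample, not a complete list.
STOP_WORDS = {"the", "is", "and", "a", "an", "of", "to"}


def normalize(text: str) -> str:
    """Lowercase the text and strip diacritics (e.g., 'é' becomes 'e')."""
    decomposed = unicodedata.normalize("NFKD", text)
    without_marks = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    return without_marks.lower()


def tokenize(text: str) -> list[str]:
    """Split normalized text into alphanumeric tokens, discarding punctuation."""
    return re.findall(r"[a-z0-9]+", text)


def preprocess(text: str) -> list[str]:
    """Normalize, tokenize, then drop stop words."""
    return [tok for tok in tokenize(normalize(text)) if tok not in STOP_WORDS]


tokens = preprocess("The Café is OPEN, and the menu is new!")
print(tokens)
```

Note that stop-word removal is a design choice rather than a requirement: transformer-based models usually keep every token, because function words can carry meaning in context.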

2. Feature Extraction

Linguistic features are quantified to capture semantic meaning:

- Word Embeddings: Representing words as semantic vectors using models like Word2Vec, GloVe, or multilingual BERT (mBERT) for cross-lingual understanding.

- Syntactic Analysis: Parsing grammatical structure to understand relationships between words, enabling applications like question answering in multiple languages.

- Semantic Analysis: Interpreting word meanings in context, supporting machine translation, multilingual sentiment analysis, and intent recognition.
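To show what "representing words as semantic vectors" buys in practice, the sketch below compares words by cosine similarity. The three-dimensional vectors in `TOY_EMBEDDINGS` are made-up values chosen for illustration; real embeddings from models such as Word2Vec or GloVe are learned from data and have hundreds of dimensions.

```python
import math

# Made-up 3-dimensional vectors for illustration only.
TOY_EMBEDDINGS = {
    "king": [0.9, 0.8, 0.1],
    "queen": [0.9, 0.7, 0.2],
    "apple": [0.1, 0.2, 0.9],
}


def cosine_similarity(u: list[float], v: list[float]) -> float:
    """Cosine of the angle between two vectors: dot(u, v) / (|u| * |v|)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


sim_royal = cosine_similarity(TOY_EMBEDDINGS["king"], TOY_EMBEDDINGS["queen"])
sim_fruit = cosine_similarity(TOY_EMBEDDINGS["king"], TOY_EMBEDDINGS["apple"])
print(f"king~queen: {sim_royal:.2f}, king~apple: {sim_fruit:.2f}")
```

With these toy values, "king" scores closer to "queen" than to "apple", which is the kind of geometric relationship that downstream intent and sentiment models exploit.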

3. Intent and Entity Recognition

NLU identifies user intent and extracts relevant entities.

For example:

- “Book a flight from New York to London next Friday” → Intent: Book flight, Entities: New York, London, next Friday.

- “Reserve a table for four at 7 PM at a Paris restaurant” → Intent: Restaurant reservation, Entities: Paris, 7 PM, party of four.

Transformer-based models such as BERT and XLM-R are widely used to handle multilingual patterns and cultural nuances in this step.
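Before reaching for a transformer model, the input and output of this step can be illustrated with a deliberately simple rule-based sketch. The keyword table, the regular expression, and the function names below are all assumptions made for this example; they handle only the two sample sentences above and are not how BERT-style models work internally.

```python
import re

# Hypothetical keyword table: an intent fires if any keyword appears.
INTENT_KEYWORDS = {
    "book_flight": ("flight",),
    "restaurant_reservation": ("table", "restaurant"),
}

# Matches "from <Origin> to <Destination>" with an optional trailing date phrase.
FLIGHT_PATTERN = re.compile(
    r"from (?P<origin>[A-Z][\w ]*?) to (?P<destination>[A-Z]\w*(?: [A-Z]\w*)*)"
    r"(?: (?P<date>next \w+|tomorrow|today))?"
)


def classify_intent(utterance: str) -> str:
    """Return the first intent whose keywords occur in the utterance."""
    lowered = utterance.lower()
    for intent, keywords in INTENT_KEYWORDS.items():
        if any(keyword in lowered for keyword in keywords):
            return intent
    return "unknown"


def extract_flight_entities(utterance: str) -> dict[str, str]:
    """Pull origin, destination, and date out of a flight request."""
    match = FLIGHT_PATTERN.search(utterance)
    if match is None:
        return {}
    return {name: value for name, value in match.groupdict().items() if value}


request = "Book a flight from New York to London next Friday"
print(classify_intent(request), extract_flight_entities(request))
```

Rules like these break quickly on paraphrases ("I need to get to London"), which is the main reason learned models are preferred for this step.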

4. Context Integration

The system integrates conversation history and external knowledge to refine understanding.

For instance:

- User: “What’s the weather in Tokyo tomorrow?”

- User (follow-up): “And how about Osaka?”

The system maintains the intent (Retrieve weather forecast) while recognizing the new entity (Osaka), allowing smooth, context-aware multi-turn dialogue across global locations.
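The Tokyo and Osaka exchange can be sketched as a small dialogue state that persists across turns. The `DialogueState` class, the `CITIES` list, and the keyword checks are hypothetical simplifications for this example; real dialogue managers track state with far richer models.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical list of cities the system knows about.
CITIES = ("Tokyo", "Osaka")


@dataclass
class DialogueState:
    intent: Optional[str] = None
    slots: dict = field(default_factory=dict)


def update_state(state: DialogueState, utterance: str) -> DialogueState:
    """Overwrite only what the new utterance mentions; keep everything else."""
    lowered = utterance.lower()
    if "weather" in lowered:
        state.intent = "get_weather"
    for city in CITIES:
        if city.lower() in lowered:
            state.slots["city"] = city
    if "tomorrow" in lowered:
        state.slots["date"] = "tomorrow"
    return state


state = DialogueState()
update_state(state, "What's the weather in Tokyo tomorrow?")
first_city = state.slots["city"]
update_state(state, "And how about Osaka?")
print(state.intent, state.slots)
```

The follow-up never mentions weather or a date, yet both survive in the state, so only the city slot changes between turns.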

3. Overview of Natural Language Generation (NLG)

Natural Language Generation (NLG) converts structured or unstructured data into coherent human-readable text or speech.

Examples include:

- Weather reporting: “Tomorrow, Paris will be sunny with a high of 23°C.”

- E-commerce: “Your order has been shipped and is expected to arrive on Friday.”

- Finance: Automatically generating global market summaries: “The Nikkei closed up 1.2%, while the S&P 500 fell 0.5% today.”

By combining NLU and NLG, AI can produce natural, context-aware responses in multilingual environments. NLU handles understanding, while NLG handles expression, enabling applications like international chatbots, voice assistants, and automated report generation for diverse global audiences.

How NLG Works and Its Core Technologies

Natural Language Generation (NLG) is a multi-stage process that converts data into natural, human-like language. The following outlines the main steps and key techniques.

1. Content Analysis

The first stage analyzes input data and extracts the key information required for generation.

Example: From sales data, select highlights such as “10% year-over-year increase.”

2. Data Understanding

The extracted information is interpreted to understand its meaning, trends, and contextual background.

Techniques include machine learning (e.g., transformer models) and rule-based approaches to capture relationships within the data.

Example: From customer reviews, extract insights such as “high satisfaction levels.”

3. Document Structuring

This step designs the overall structure and sequence of the output document.

Example: A financial report might follow the flow: Executive Summary → Sales Analysis → Future Outlook.

4. Sentence Aggregation

Related information is combined to reduce redundancy and produce natural sentences.

Example: “Sales increased by 10%” and “profit margins improved” → “Sales increased by 10%, and profit margins also improved.”
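A minimal sketch of this aggregation step, assuming each fact is already available as a (subject, predicate) pair. The `aggregate` function is a hypothetical helper written for this example, and inserting "also" before later predicates is just one simple aggregation rule.

```python
def aggregate(facts: list[tuple[str, str]]) -> str:
    """Join (subject, predicate) facts into one sentence, marking later ones with 'also'."""
    if not facts:
        return ""
    clauses = []
    for position, (subject, predicate) in enumerate(facts):
        if position == 0:
            clauses.append(f"{subject} {predicate}")
        else:
            clauses.append(f"{subject} also {predicate}")
    if len(clauses) == 1:
        sentence = clauses[0]
    else:
        sentence = ", ".join(clauses[:-1]) + ", and " + clauses[-1]
    # Capitalize the first letter and close the sentence.
    return sentence[0].upper() + sentence[1:] + "."


combined = aggregate([("sales", "increased by 10%"), ("profit margins", "improved")])
print(combined)  # Sales increased by 10%, and profit margins also improved.
```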

5. Grammatical Structuring

Grammar rules and semantic structure are applied to create coherent and readable sentences. Vocabulary choices and sentence flow are optimized for naturalness.

6. Language Presentation

The final output is formatted according to the desired medium—report, email, or speech—and adjusted for style or tone depending on the audience.

Example: Formal for business reports; casual for consumer-facing communications.

Key NLG Techniques

- Template-based NLG: Inserts data into predefined sentence templates; simple and accurate but less flexible.

- Statistical NLG: Uses probabilistic models (e.g., n-gram language models or Hidden Markov Models) to generate text.

- Neural NLG: Employs deep learning (e.g., Transformers, GPT series) to produce contextually appropriate, natural text; currently the dominant approach.

- Reinforcement Learning: Optimizes dialogue or creative content based on user feedback or reward signals.
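Of the techniques listed above, the template-based approach is the easiest to sketch. The example below fills a predefined sentence with structured forecast data using Python's standard `string.Template`; the template wording and the field names (`city`, `condition`, `high_c`) are assumptions chosen for this example.

```python
from string import Template

# Predefined sentence template; $-placeholders are filled from structured data.
WEATHER_TEMPLATE = Template(
    "Tomorrow, $city will be $condition with a high of ${high_c}°C."
)


def render_weather(forecast: dict) -> str:
    """Insert forecast fields into the template; raises KeyError if one is missing."""
    return WEATHER_TEMPLATE.substitute(forecast)


report = render_weather({"city": "Paris", "condition": "sunny", "high_c": 23})
print(report)  # Tomorrow, Paris will be sunny with a high of 23°C.
```

The output is fully predictable, which is why templates remain common where accuracy matters more than variety, but every new sentence shape requires a new template.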

4. Differences Between NLP, NLU, and NLG

Understanding the distinct roles of NLP, NLU, and NLG clarifies how AI reads, understands, and expresses human language.

| Aspect | NLP (Natural Language Processing) | NLU (Natural Language Understanding) | NLG (Natural Language Generation) |
|---|---|---|---|
| Purpose | Structure language data for machine processing | Interpret meaning and intent | Express understood information in natural language |
| Role | Reading | Understanding | Speaking / Writing |
| Core Processes | Tokenization, parsing, morphological analysis, named entity recognition | Semantic analysis, sentiment analysis, context understanding, intent classification | Document structuring, sentence generation, summarization, style adjustment |
| Input Data | Raw text (words, sentences, grammar) | Structured data processed by NLP | Meaning data or intent models derived from NLU |
| Output Data | Syntactic information, tagging, language structure | Intent models, sentiment labels, semantic maps | Human-readable text, conversation, reports |
| Characteristics | Focus on grammar and structure | Focus on meaning and context | Focus on expression and generation |
| Representative Applications | Search engines, machine translation, speech recognition | Chatbots, Q&A systems, sentiment analysis | ChatGPT, report generation, automated content creation |
| Dependencies | Forms the foundation for NLU and NLG | Uses NLP output for understanding | Generates output based on NLU understanding |
| Result | Structured textual data | Data model reflecting understood intent | Natural, human-readable text |

5. Relationship Between NLP, NLU, and NLG

Although each technology plays a distinct role, their integration enables AI to conduct context-aware, natural, and accurate conversations. NLP structures the language, NLU interprets its meaning, and NLG expresses it as human-readable text. Together they form the foundation of modern AI language systems.

Natural Language Processing (NLP) is a branch of artificial intelligence (AI) that enables computers to understand and interact with human language. By leveraging techniques such as tokenization, lemmatization, parsing, and semantic analysis, NLP extracts meaning from unstructured text data and supports applications such as machine translation and natural, bidirectional communication between humans and machines.

Modern conversational systems rely on three layers: core NLP processing, NLU (Natural Language Understanding), and NLG (Natural Language Generation). Core NLP processing provides the foundation for handling language data; NLU interprets meaning, intent, and context; and NLG generates human-like expressions from the understood information.

By combining these technologies, conversational AI, virtual assistants, and chatbots can conduct natural and fluent interactions that consider context and user intent.
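The hand-off between the three layers can be sketched end to end in a few lines. Everything here is a simplified assumption for illustration: `FAKE_FORECASTS` stands in for a real weather service, the functions are hypothetical, and the reply hard-codes "Tomorrow" rather than extracting the date.

```python
import re

# Hypothetical forecast lookup standing in for a real weather service.
FAKE_FORECASTS = {"Tokyo": ("sunny", 25)}


def nlp_tokenize(text: str) -> list[str]:
    """NLP layer: turn raw text into word tokens."""
    return re.findall(r"[A-Za-z]+", text)


def nlu_interpret(tokens: list[str]) -> dict:
    """NLU layer: derive an intent and a city entity from the tokens."""
    lowered = [tok.lower() for tok in tokens]
    intent = "get_weather" if "weather" in lowered else "unknown"
    city = next((tok for tok in tokens if tok in FAKE_FORECASTS), None)
    return {"intent": intent, "city": city}


def nlg_respond(meaning: dict) -> str:
    """NLG layer: express the result as a natural-language sentence."""
    if meaning["intent"] != "get_weather" or meaning["city"] is None:
        return "Sorry, I did not understand the request."
    condition, high_c = FAKE_FORECASTS[meaning["city"]]
    return f"Tomorrow in {meaning['city']}, it will be {condition} with a high of {high_c}°C."


reply = nlg_respond(nlu_interpret(nlp_tokenize("What's the weather in Tokyo tomorrow?")))
print(reply)
```

Each function consumes only the previous layer's output, which mirrors the dependency chain described above. In large neural models these stages are learned jointly rather than implemented as separate functions.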

6. Limitations and Challenges of NLP, NLU, and NLG

While AI language technologies are rapidly evolving, they still face technical, linguistic, and operational limitations. Understanding these challenges is crucial for effective deployment and risk mitigation.

6.1 Limitations and Challenges of NLP

NLP forms the backbone of language understanding and analysis, yet several issues remain:

6.1.1 Difficulty in Contextual Understanding

NLP can analyze words and grammar but may struggle to accurately interpret context or intent. Irony, metaphors, subtle emotional cues, and implied meaning often pose risks for misinterpretation.

6.1.2 Multilingual and Cultural Challenges

Many NLP models are primarily designed for English. Processing other languages or culturally specific expressions can reduce translation and analysis accuracy due to differences in word order, grammar, and idiomatic usage.

6.1.3 Data Bias

NLP heavily depends on training data. If the data contains biases related to gender, race, or geography, outputs may reflect those biases, raising ethical concerns and requiring careful governance.

6.1.4 Domain-Specific and Long-Form Limitations

Specialized fields such as healthcare, law, and finance involve complex terminology and structures, which NLP may fail to process accurately. Similarly, long texts or intricate argumentation can exceed current model capabilities.

6.1.5 Resource and Cost Constraints

High-performance NLP models demand large datasets, significant computational power, and specialized expertise. Development and operational costs can be prohibitive, especially for small to medium-sized organizations.

6.2 Limitations and Challenges of NLU

Natural Language Understanding enables AI to comprehend the meaning of human language, but handling ambiguity and variability remains challenging:

6.2.1 Ambiguous Expressions

Human language contains polysemy, ellipsis, and figurative expressions. NLU attempts to infer meaning from context, but insufficient background knowledge can lead to misinterpretation.

6.2.2 Difficulty in Understanding Emotion and Intent

NLU models reduce emotion and speaker intent to numeric scores or discrete labels, so subtle nuances, sarcasm, and indirect speech are hard to capture. This may result in unnatural or inappropriate responses.

6.2.3 Lack of Common Sense and Knowledge

Even large-scale NLU models do not inherently understand common sense or contextual knowledge. Logically correct outputs may still appear unnatural or misleading.

6.2.4 Domain and Cultural Accuracy

NLU performance depends heavily on training data. Specialized domains or different cultural contexts can reduce understanding accuracy, potentially leading to incorrect interpretations.

6.2.5 Model Transparency and Explainability

Deep learning-based NLU models are often "black boxes," making it difficult to explain why a particular interpretation was made. This raises trust and ethical concerns.

6.3 Limitations and Challenges of NLG

Natural Language Generation enhances efficiency in content creation but presents several challenges:

6.3.1 Language-Specific Nuances

NLG has been primarily developed for English. Generating text in multiple languages while preserving naturalness and cultural nuances requires language-specific optimization.

6.3.2 Creativity and Emotional Expression

While NLG excels at rule- and data-driven text, it struggles with creative or emotionally nuanced content. Narrative writing, humor, and marketing copy often still require human intervention.

6.3.3 Dependence on Data Quality

NLG performance depends on both the quantity and quality of training data. Biased or inaccurate data can compromise output reliability. High-quality, diverse datasets are essential.

6.3.4 Ethical and Social Risks

Generated content may contain misinformation, bias, or copyright violations. Fields such as healthcare, law, and journalism require human oversight and robust ethical guidelines.

6.3.5 Cost and Operational Burden

Advanced NLG systems incur high implementation, maintenance, and fine-tuning costs. Gradual, scalable adoption strategies are recommended for organizations with limited resources.

Conclusion

NLP, NLU, and NLG constitute the three pillars of AI language processing. NLP structures and analyzes language data, NLU interprets context and meaning, and NLG expresses information naturally and appropriately. Together, these technologies enable AI to produce fluent, human-like dialogue and advanced text generation.

These technologies already underpin daily and business applications such as search engines, chatbots, translation tools, and AI writing platforms. Behind AI’s ability to “understand, reason, and communicate” lies the seamless integration of these three layers, enabling sophisticated information processing.

FAQs

NLP (Natural Language Processing) is a technology that structures and analyzes language data, converting it into a format that computers can process. Through techniques such as morphological analysis, syntactic parsing, and named entity recognition, NLP extracts grammatical, lexical, and structural information from raw text.

NLU (Natural Language Understanding) builds on NLP-processed data to interpret context, intent, sentiment, and meaning. By performing tasks such as intent recognition, entity extraction, and contextual understanding, NLU captures the user’s requirements and the underlying meaning of information.

NLG (Natural Language Generation), in turn, transforms the information understood by NLU or structured data into natural, human-readable text. Through document structuring, sentence aggregation, grammatical application, and style adjustment, NLG generates outputs such as reports, chatbot responses, and articles.

In essence, NLP “reads,” NLU “understands,” and NLG “speaks.” The integration of these three components enables sophisticated, context-aware language processing.

To enable AI to converse naturally with people around the world, three layers of technology—NLP, NLU, and NLG—work seamlessly together.

It begins with Natural Language Processing (NLP). This layer breaks down the user’s input into smaller units, analyzes the structure of words and sentences, and converts it into a format that the AI can process. Think of it as giving the machine “eyes” to read and organize language.

Next comes Natural Language Understanding (NLU). Here, the AI interprets the meaning behind the words. It identifies the user’s intent, extracts important information such as names, dates, or locations, and analyzes context. This step ensures the AI doesn’t just see words—it understands what the user is asking, whether it’s checking the weather, booking a flight, or finding a local restaurant.

Finally, Natural Language Generation (NLG) takes over. Based on the AI’s understanding, NLG crafts responses that are natural, coherent, and appropriate for the context. For instance, if someone in New York asks, “What’s the weather in Tokyo tomorrow?”, the AI can reply: “Tomorrow in Tokyo, it will be sunny with a high of 25°C.” The same system can adapt seamlessly to other languages and cultural contexts, producing answers that feel natural whether the user is in Paris, Nairobi, or São Paulo.

Through this three-step process, AI moves beyond rigid, keyword-based responses. It can engage in continuous, meaningful conversations that feel intuitive and human-like, making it a truly global communication tool.
